Generative UI Notes

I’m really interested in this emerging idea that the future of web design is Generative UI Design.

We see hints of this already in products, like Figma Sites, that tout being able to create websites on the fly with prompts.

Putting aside the clear downsides of shipping half-baked technology as a production-ready product (and they are hard to put aside), the angle I’m particularly looking at is research aimed at using Generative AI (or GenAI) to output personalized interfaces.

It’s wild because it completely flips the way we think about UI design on its head.

Rather than anticipating user needs and designing around them, GenAI sees the user needs and produces an interface custom-tailored to them.

In a sense, a website becomes a snowflake where no two experiences with it are the same.

Again, it’s wild.

I’m not here to speculate, opine, or preach on Generative UI Design (let’s call it GenUI for now).

Just loose notes that I’ll update as I continue learning about it.

Generative UI is a new modality where the AI model generates not only content, but the entire user experience.

This results in custom interactive experiences, including rich formatting, images, maps, audio and even simulations and games, in response to any prompt (instead of the widely adopted “walls-of-text”).

A generative UI (genUI) is a user interface that is dynamically generated in real time by artificial intelligence to provide an experience customized to fit the user’s needs and context.

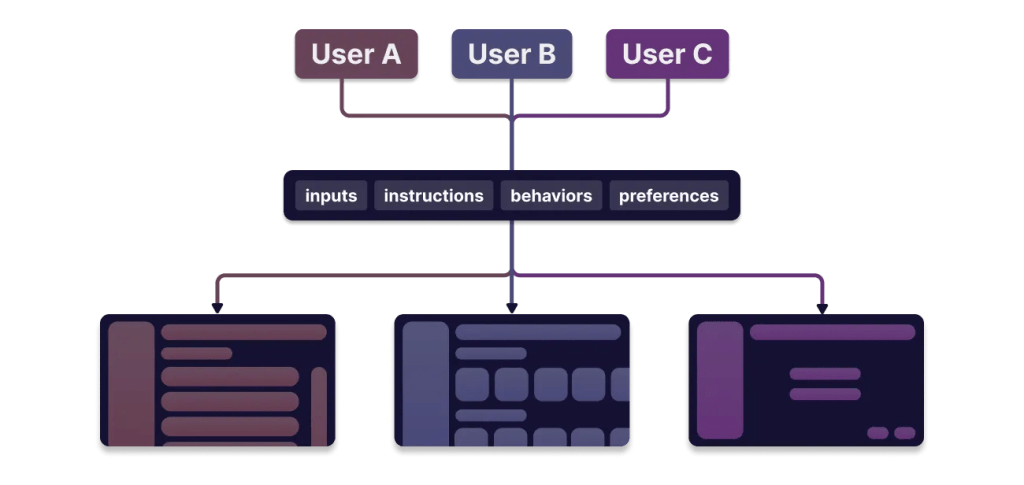

A Generative User Interface (GenUI) is an interface that adapts to, or processes, context such as inputs, instructions, behaviors, and preferences through the use of generative AI models (e.g. LLMs) in order to enhance the user experience.

Put simply, a GenUI interface displays different components, information, layouts, or styles, based on who’s using it and what they need at that moment.
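
To make that last definition a bit more concrete for myself, here is a rough TypeScript sketch of the "context in, interface description out" idea. Everything in it is hypothetical: the `UserContext` and `UISpec` types and the `generateUISpec` function are names I made up for illustration, not part of any real library. The keyword rules are only a stand-in for the generative model, which in an actual GenUI system would presumably produce the spec itself.

```typescript
// Hypothetical shapes for illustration only; not taken from any real GenUI library.
type ComponentKind = "map" | "chart" | "text";

interface UserContext {
  prompt: string;                 // what the user asked for
  device: "mobile" | "desktop";   // where they are viewing it
  prefersReducedMotion: boolean;  // a stated preference
}

interface UISpec {
  layout: "single-column" | "two-column";
  components: ComponentKind[];
  animated: boolean;
}

// Assumption: this function stands in for a call to a generative model.
// Simple keyword rules are used here so the sketch runs on its own.
function generateUISpec(ctx: UserContext): UISpec {
  const prompt = ctx.prompt.toLowerCase();
  const components: ComponentKind[] = [];

  if (/\b(where|near|directions)\b/.test(prompt)) components.push("map");
  if (/\b(compare|trend)\b/.test(prompt)) components.push("chart");
  if (components.length === 0) components.push("text"); // fall back to plain text

  // Two columns only when there is room (desktop) and more than one component.
  const layout =
    ctx.device === "desktop" && components.length > 1
      ? "two-column"
      : "single-column";

  return { layout, components, animated: !ctx.prefersReducedMotion };
}

// The same function yields a different interface description per user and moment.
const exampleSpec = generateUISpec({
  prompt: "Where is the nearest coffee shop?",
  device: "mobile",
  prefersReducedMotion: true,
});
console.log(exampleSpec);
```

The point of the sketch is only the shape of the flow: who is using the thing and what they want goes in, and a description of components, layout, and style comes out, which a renderer would then turn into actual UI.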